Kronos: A Foundation Model That Teaches Candlestick Charts to Speak Transformer Language

If the market is noise, then any attempt to predict the next candle is like decoding radio static. The authors of Kronos propose a radical approach: convert exchange candles into discrete tokens (like words in a language) and train a transformer to predict the "next word" — that is, the next candle.

This isn't a general-purpose time-series transformer "for all occasions" but a specialized model for multidimensional K-lines (OHLCV candles) with calendar features. The repository shiyu-coder/Kronos is released under the MIT license, the weights are published on Hugging Face, and the paper has been accepted at AAAI 2026.

Core Idea: Candles = Language

Just as ChatGPT learns to predict the next word in text, Kronos learns to predict the next candle in a price series. But first, you need to solve a key problem: a candle is a continuous vector (open, high, low, close, volume), while transformers work with discrete tokens.

The solution — a two-level architecture:

- Tokenizer (BSQ) — compresses the continuous candle into a discrete code

- Decoder (transformer) — predicts the next code autoregressively

BSQ Tokenizer: How to Compress a Candle into Two Numbers

The key module — Binary Spherical Quantizer (BSQ):

- The continuous candle vector is projected onto a sphere (F.normalize)

- Then quantized into a binary code

- The code is split into two levels: coarse (S1) and detailed (S2)
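The three steps above can be sketched in a few lines of dependency-free Python. This is only an illustration under stated assumptions, not the Kronos implementation: the function name `bsq_quantize`, the 4-dimensional input, and the 2 + 2 bit split are all hypothetical, and the manual L2 normalization stands in for the role `F.normalize` plays in PyTorch.

```python
import math

def bsq_quantize(z, s1_bits):
    """Toy binary spherical quantization of one vector z.

    Returns (s1, s2): the coarse and the fine sub-token index.
    Hypothetical helper for illustration, not the Kronos API.
    """
    # Step 1: project onto the unit sphere (L2 normalization).
    norm = math.sqrt(sum(x * x for x in z)) or 1.0  # guard against a zero vector
    u = [x / norm for x in z]

    # Step 2: binarize, keeping only the sign of each coordinate.
    bits = [1 if x > 0 else 0 for x in u]

    # Step 3: split the bit string and read each half as an integer.
    def to_int(bit_list):
        value = 0
        for b in bit_list:
            value = (value << 1) | b
        return value

    return to_int(bits[:s1_bits]), to_int(bits[s1_bits:])

latent = [0.8, -0.3, 0.1, -1.2]  # assumed 4-dim vector, made up for the example
s1, s2 = bsq_quantize(latent, s1_bits=2)
print(s1, s2)  # 2 2  (bits 1,0 | 1,0)
```

Because every coordinate collapses to a single bit, the whole code for a candle is just two small integers, which is what the next stage consumes.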

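Splitting the code into S1 and S2 is vocabulary factorization: instead of one massive dictionary with S1 × S2 entries, the model uses two small embedding tables, one per level, with S1 + S2 entries in total. The saving grows quickly with the number of bits.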
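To get a feel for the saving, the arithmetic can be checked directly. The 10 + 10 bit split below is an assumption chosen for illustration, and `embedding_rows` is a hypothetical helper, not part of the repository.

```python
def embedding_rows(s1_bits, s2_bits):
    """Compare embedding-table sizes for a flat vs. a factorized vocabulary."""
    flat = 2 ** (s1_bits + s2_bits)            # one table indexed by the full code
    factorized = 2 ** s1_bits + 2 ** s2_bits   # two tables, one per level
    return flat, factorized

flat, factorized = embedding_rows(10, 10)  # assumed split, for illustration only
print(flat, factorized)  # 1048576 2048
```

With these assumed sizes the factorized layout needs 512 times fewer embedding rows than the flat one.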

Hierarchical Decoder: First the Scenario, Then Details

The dual head (DualHead) works in sequence:

- First the model predicts S1 — the "rough scenario" (going up? down? approximately how much?)

- Then with S1 fixed, refines S2 — details (exact prices, volume)
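The coarse-then-fine order can be sketched as two-stage sampling. This is a minimal sketch, not the DualHead code: `sample_two_stage` and `toy_s2_head` are hypothetical names, and the toy head simply returns hand-made logits that depend on the chosen S1, where a real model would run a learned network.

```python
import math
import random

def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def sample_two_stage(s1_logits, s2_head, rng):
    """Sample the coarse token first, then the fine token conditioned on it."""
    # Stage 1: draw S1, the "rough scenario".
    s1 = rng.choices(range(len(s1_logits)), weights=softmax(s1_logits))[0]
    # Stage 2: the S2 logits are computed with S1 already fixed.
    s2_logits = s2_head(s1)
    s2 = rng.choices(range(len(s2_logits)), weights=softmax(s2_logits))[0]
    return s1, s2

def toy_s2_head(s1, vocab=4):
    """Hypothetical stand-in for the fine head: strongly prefers (s1 + 1) % vocab."""
    return [20.0 if i == (s1 + 1) % vocab else 0.0 for i in range(vocab)]

rng = random.Random(0)  # fixed seed so the example is repeatable
s1, s2 = sample_two_stage([0.0, 20.0, 0.0, 0.0], toy_s2_head, rng)
print(s1, s2)
```

The point of the design is visible in `sample_two_stage`: the fine head never has to choose among all S1 × S2 combinations at once, only among the S2 options that are plausible for the scenario already picked.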

Links

- 💻 GitHub: shiyu-coder/Kronos

- 📄 Paper: arXiv:2508.02739

- 📄 License: MIT

Conclusion

Kronos isn't "yet another transformer for time series" but a coherent pipeline: BSQ tokenizer with codebook control, hierarchical language model with two discretization levels, autoregression with two-stage sampling, local window normalization. It's an open implementation of the "candles = language" idea.

MarketMaker.cc Team

Quantitative research and strategy

Read More

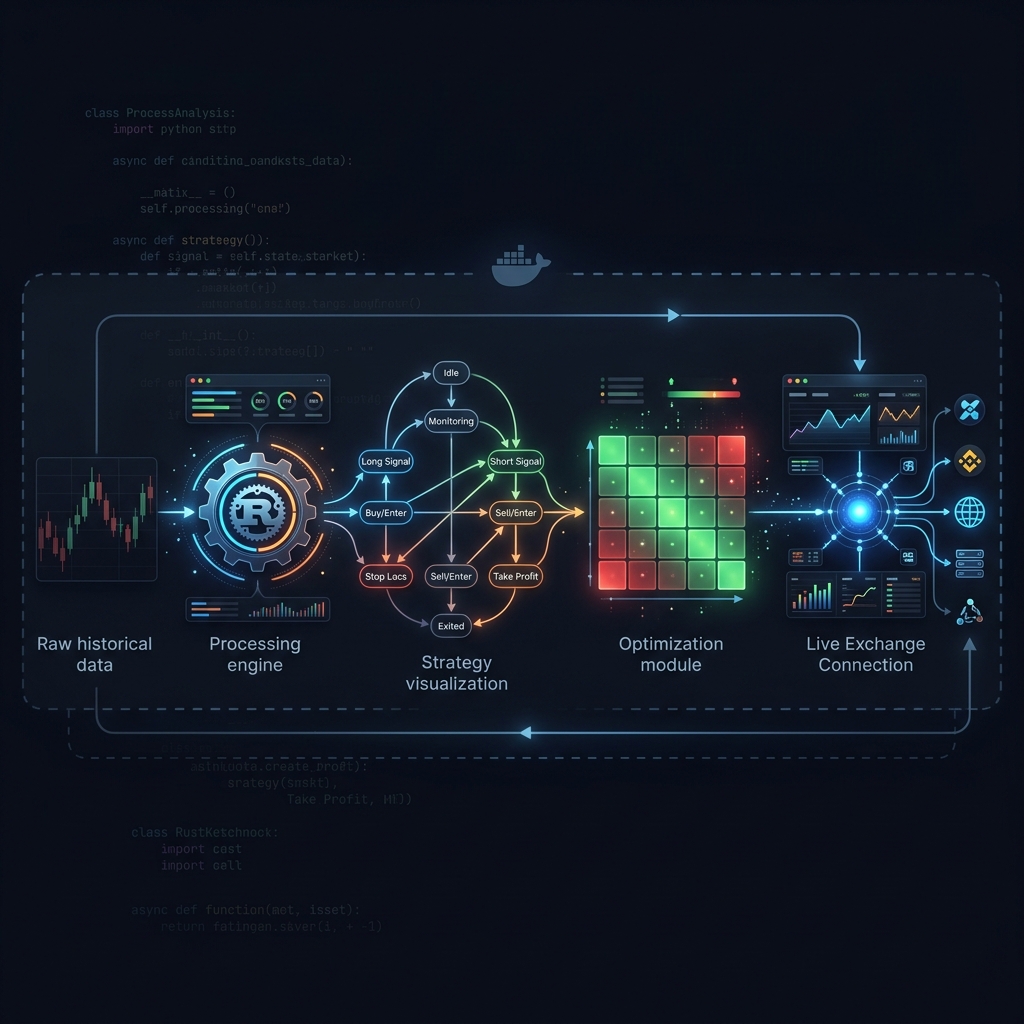

Jesse: Crypto Algo-Trading Framework with a Minute-Based Engine in Python and Rust

AI Hedge Fund: A Multi-Agent Fund Where AI Analysts Vote on Trades