Signal Correlation: How Many Pairs to Monitor

MarketMaker.cc Team

Quantitative Research and Strategy

You launch a strategy on 10 crypto pairs: BTC/USDT, ETH/USDT, SOL/USDT, AVAX/USDT, and six more alts. The logic seems bulletproof: if the strategy is active 5% of the time on one pair, then across 10 pairs at least one should be active about 40% of the time. An eightfold increase in utilization.

In practice, utilization turns out to be 15-16%, not 40%. Your 10 pairs behave like 3. Capital sits idle, fill_efficiency drops, and the effective portfolio return ends up roughly 2.5 times lower than projected.

The reason is signal correlation. And in cryptocurrencies, it is catastrophically high.

In traditional finance, diversification works because Apple stock and an oil ETF react to different factors. In the cryptocurrency market, everything is different.

BTC is the dominant factor. When Bitcoin drops 5%, ETH drops 6-8%, SOL drops 8-12%, altcoins drop 10-20%. Daily return correlations in the crypto market are consistently above 0.6, and during panic they approach 1.0.

But for us — algo traders — what matters is not price correlation, but signal correlation. If the strategy is based on momentum and BTC triggers an entry signal, there is a high probability that ETH and SOL will trigger an analogous signal in the same minute. All pairs enter long simultaneously, all exit simultaneously. Ten positions — but essentially one bet.

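Suppose a strategy on each of $N$ pairs is active a fraction $p$ of the time. If signals were completely independent, the probability that at least one pair is active would be:

$$P(\text{at least one active}) = 1 - (1 - p)^N$$

For Strategy B ($p = 0.05$, $N = 10$):

$$P = 1 - 0.95^{10} \approx 40.1\%$$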

But signals are not independent. Cryptocurrencies move in sync — meaning signals emerge and extinguish in clusters.

The intuition is this: if 10 pairs are correlated, they carry information from not 10 independent sources but, say, 3-4. We formalize this through effective_N:

$$N_{eff} = \frac{N}{C_f}$$

where $C_f$ is the correlation factor, reflecting the average pairwise signal correlation. When $C_f = 1$, the pairs are fully independent; when $C_f = N$, they are identical.

For crypto pairs, a typical $C_f \approx 3$. Then:

$$N_{eff} = \frac{10}{3} \approx 3.3, \qquad P = 1 - 0.95^{10/3} \approx 15.7\%$$

Not 40%, but 15.7%. A 2.5x difference. Fill efficiency drops accordingly, and with it the effective return of the entire portfolio (see PnL per Active Time).
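
To sanity-check the effective_N shortcut, here is a minimal Monte Carlo sketch. It assumes a one-factor Gaussian model for the signals (a shared driver plus pair-specific noise). The function name `simulate_correlated_signals`, the `common_weight` parameter, and all numeric settings are illustrative assumptions, not values measured on real strategies.

```python
import numpy as np


def simulate_correlated_signals(n_pairs=10, p=0.05, common_weight=0.6,
                                n_steps=200_000, seed=0):
    """Binary signals driven by one shared factor plus pair-specific noise (assumed model)."""
    rng = np.random.default_rng(seed)
    common = rng.standard_normal((n_steps, 1))        # shared market driver
    own = rng.standard_normal((n_steps, n_pairs))     # pair-specific noise
    latent = np.sqrt(common_weight) * common + np.sqrt(1 - common_weight) * own
    threshold = np.quantile(latent, p)                # lowest p share of values counts as "active"
    return (latent < threshold).astype(int)


signals = simulate_correlated_signals()
n_pairs = signals.shape[1]
p_hat = signals.mean()                                # realized active fraction per pair

# Mean pairwise signal correlation -> C_f -> N_eff
corr = np.corrcoef(signals, rowvar=False)
mean_corr = corr[np.triu_indices(n_pairs, k=1)].mean()
c_f = 1 + (n_pairs - 1) * mean_corr
n_eff = n_pairs / c_f

p_independent = 1 - (1 - p_hat) ** n_pairs            # naive estimate
p_effective = 1 - (1 - p_hat) ** n_eff                # effective_N estimate
p_empirical = (signals.sum(axis=1) > 0).mean()        # what the simulation shows

print(f"C_f={c_f:.2f}, N_eff={n_eff:.2f}")
print(f"independent={p_independent:.1%}, effective_N={p_effective:.1%}, "
      f"empirical={p_empirical:.1%}")
```

Under this assumed model the empirical share of time with at least one active pair falls below the independent-case value. How closely it tracks the effective_N estimate depends on the model and on `common_weight`.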

The crypto market has a pronounced factor structure. BTC explains 60-80% of the daily return variance for most altcoins. This is clearly visible through PCA (Principal Component Analysis):

```python
import numpy as np
from sklearn.decomposition import PCA


def analyze_crypto_factor_structure(returns_matrix: np.ndarray, pair_names: list) -> dict:
    """
    PCA analysis of the factor structure of crypto returns.

    Args:
        returns_matrix: returns matrix [n_days x n_pairs]
        pair_names: list of pair names
    """
    pca = PCA()
    pca.fit(returns_matrix)

    explained = pca.explained_variance_ratio_
    cumulative = np.cumsum(explained)

    print("Factor structure:")
    for i, (var, cum) in enumerate(zip(explained[:5], cumulative[:5])):
        print(f" PC{i+1}: {var:.1%} variance (cumulative: {cum:.1%})")

    # Loadings of the first principal component, interpreted as the BTC factor
    loadings = pca.components_[0]
    print("\nPC1 loadings (BTC factor):")
    for name, load in sorted(zip(pair_names, loadings), key=lambda x: -abs(x[1])):
        print(f" {name}: {load:.3f}")

    return {
        "explained_variance": explained,
        # Smallest number of components whose cumulative variance reaches 90%
        "n_effective_factors": int(np.searchsorted(cumulative, 0.90)) + 1,
        "pc1_loadings": dict(zip(pair_names, loadings)),
    }
```

Typical result for a portfolio of 10 crypto pairs:

| Component | Explained Variance | Cumulative |

|---|---|---|

| PC1 (BTC) | 65% | 65% |

| PC2 | 12% | 77% |

| PC3 | 8% | 85% |

| PC4 | 5% | 90% |

| PC5-PC10 | 10% | 100% |

Four factors explain 90% of variance. Out of 10 pairs, no more than 4 are "independent."

Here is an important nuance. Price correlation and signal correlation are different things. BTC and ETH prices are correlated at 0.85, but the signals of a specific strategy may be correlated at 0.95 or at 0.50 — depending on the entry logic.

Example: an RSI overbought/oversold strategy. RSI on BTC crosses 30 (oversold) — enter long. ETH at the same moment may also be oversold (signal correlation ~0.90). Or it may not be, if ETH was falling more slowly (signal correlation ~0.40).

The correct approach is to measure the correlation of signals specifically, not price series:

```python
import numpy as np
from itertools import combinations


def signal_correlation_matrix(
    signals: dict,  # {pair: np.array of 0/1 per minute}
    method: str = "pearson",
) -> tuple:
    """
    Calculate the signal correlation matrix (binary: 0 = flat, 1 = in position).

    Args:
        signals: dictionary {pair_name: binary_signal_array}
        method: correlation method ("pearson", "jaccard")

    Returns:
        (corr_matrix, pairs): the matrix and the sorted pair names that index it
    """
    pairs = sorted(signals.keys())
    n = len(pairs)
    corr_matrix = np.ones((n, n))

    for i, j in combinations(range(n), 2):
        s_i = np.asarray(signals[pairs[i]])
        s_j = np.asarray(signals[pairs[j]])

        if method == "pearson":
            # NaN if either signal is constant (never or always in position)
            corr = np.corrcoef(s_i, s_j)[0, 1]
        elif method == "jaccard":
            # Cast to bool so the bitwise operators also work on float 0/1 arrays
            a, b = s_i.astype(bool), s_j.astype(bool)
            intersection = np.sum(a & b)
            union = np.sum(a | b)
            corr = intersection / union if union > 0 else 0.0
        else:
            raise ValueError(f"Unknown method: {method}")

        corr_matrix[i, j] = corr
        corr_matrix[j, i] = corr

    return corr_matrix, pairs


def estimate_correlation_factor(corr_matrix: np.ndarray) -> float:
    """
    Estimate correlation_factor from the signal correlation matrix.

    correlation_factor = 1 + (N-1) * mean_pairwise_correlation

    When correlation is 0 → C_f = 1 (all independent).
    When correlation is 1 → C_f = N (all identical).
    """
    n = corr_matrix.shape[0]
    # Off-diagonal entries above the diagonal: each pair counted once
    upper_triangle = corr_matrix[np.triu_indices(n, k=1)]
    mean_corr = np.mean(upper_triangle)

    correlation_factor = 1 + (n - 1) * mean_corr
    return correlation_factor
```
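
The functions above expect per-minute 0/1 arrays. If your backtester stores positions as `(entry_min, exit_min)` intervals instead, a small converter is needed first. The sketch below is one possible version. The helper name `intervals_to_binary` and the convention that the exit minute is exclusive are assumptions made for illustration.

```python
import numpy as np


def intervals_to_binary(intervals, total_minutes):
    """Convert [(entry_min, exit_min), ...] to a 0/1 per-minute array (exit exclusive)."""
    signal = np.zeros(total_minutes, dtype=np.int8)
    for entry_min, exit_min in intervals:
        start = max(entry_min, 0)
        end = min(exit_min, total_minutes)
        if start < end:
            signal[start:end] = 1
    return signal


# Toy example: two pairs whose first trades overlap and whose second trades do not
btc = intervals_to_binary([(10, 40), (100, 130)], total_minutes=200)
eth = intervals_to_binary([(12, 45), (150, 160)], total_minutes=200)

overlap_minutes = int(np.sum(btc & eth))          # minutes when both are in position
pearson = float(np.corrcoef(btc, eth)[0, 1])      # signal correlation of the two arrays

print(f"BTC active: {btc.sum()} min, ETH active: {eth.sum()} min")
print(f"overlap: {overlap_minutes} min, pearson: {pearson:.2f}")
```

The resulting arrays can be passed as the values of the `signals` dictionary.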

Correlation is not static. During calm periods, BTC and alts can diverge — ETH rises on Ethereum news, SOL on Solana news. In a crisis, everything collapses into a single factor: risk-on/risk-off.

```python
def rolling_correlation_factor(
    signals: dict,
    window_days: int = 30,
    step_days: int = 7,
) -> list:
    """
    Rolling correlation_factor to detect regime changes.

    Relies on signal_correlation_matrix and estimate_correlation_factor above.
    """
    pairs = sorted(signals.keys())
    minutes_per_day = 1440
    window = window_days * minutes_per_day
    step = step_days * minutes_per_day

    total_minutes = len(signals[pairs[0]])
    results = []

    # "+ 1" so that the last full window is not skipped
    for start in range(0, total_minutes - window + 1, step):
        end = start + window
        window_signals = {p: signals[p][start:end] for p in pairs}

        corr_matrix, _ = signal_correlation_matrix(window_signals)
        cf = estimate_correlation_factor(corr_matrix)

        results.append({
            "start_minute": start,
            "end_minute": end,
            "correlation_factor": cf,
            "effective_n": len(pairs) / cf,
        })

    return results
```

Typical picture for 10 crypto pairs:

| Market Regime | Average Signal Correlation | $C_f$ | $N_{eff}$ |

|---|---|---|---|

| Sideways (low vol) | 0.15-0.25 | 2.4-3.3 | 3.0-4.2 |

| Uptrend | 0.25-0.40 | 3.3-4.6 | 2.2-3.0 |

| Downtrend | 0.30-0.50 | 3.7-5.5 | 1.8-2.7 |

| Panic (crash) | 0.60-0.90 | 6.4-9.1 | 1.1-1.6 |

During panic, 10 pairs compress to 1-2 effective ones. Precisely when diversification is needed most, it vanishes. This is the crypto analogue of the classic "correlations go to 1 in a crisis."
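
The regime figures follow directly from the relation `correlation_factor = 1 + (N-1) * mean_pairwise_correlation` with N = 10. A minimal sketch that recomputes them is shown below. The helper names and the `regimes` dictionary are illustrative, with the correlation ranges copied from the table.

```python
def correlation_factor(mean_corr: float, n_pairs: int) -> float:
    """C_f = 1 + (N - 1) * mean pairwise signal correlation."""
    return 1 + (n_pairs - 1) * mean_corr


def effective_n(mean_corr: float, n_pairs: int) -> float:
    """N_eff = N / C_f."""
    return n_pairs / correlation_factor(mean_corr, n_pairs)


# Average signal correlation ranges per regime, taken from the table
regimes = {
    "Sideways (low vol)": (0.15, 0.25),
    "Uptrend": (0.25, 0.40),
    "Downtrend": (0.30, 0.50),
    "Panic (crash)": (0.60, 0.90),
}

n_pairs = 10
for name, (low, high) in regimes.items():
    cf_low, cf_high = correlation_factor(low, n_pairs), correlation_factor(high, n_pairs)
    # Higher correlation gives a lower N_eff, so the bounds swap
    n_eff_low, n_eff_high = effective_n(high, n_pairs), effective_n(low, n_pairs)
    print(f"{name}: C_f = {cf_low:.1f}-{cf_high:.1f}, "
          f"N_eff = {n_eff_low:.1f}-{n_eff_high:.1f}")
```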

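The idea of effective_N is borrowed from statistics, where effective sample size accounts for autocorrelation of observations. For our purposes:

$$N_{eff} = \frac{N}{1 + (N - 1)\,\bar{\rho}}$$

where $\bar{\rho}$ is the average pairwise signal correlation. Simplified notation:

$$N_{eff} = \frac{N}{C_f}, \qquad C_f = 1 + (N - 1)\,\bar{\rho}$$

Properties:

- $\bar{\rho} = 0$: $C_f = 1$ and $N_{eff} = N$ (all pairs independent).
- $\bar{\rho} = 1$: $C_f = N$ and $N_{eff} = 1$ (all pairs identical).
- $\bar{\rho} < 0$: $C_f < 1$ and $N_{eff} > N$ (anti-correlated signals).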

In practice, there are three approaches:

1. From the signal correlation matrix (exact).

Run the strategy on all pairs, obtain binary signals (0/1 for each minute), build the correlation matrix, compute $C_f$ using the formula above.

2. From PCA of price returns (approximate).

If signals strongly depend on price dynamics (momentum, mean-reversion), you can estimate $N_{eff}$ as the number of PCA components explaining 90% of variance.

3. From asset class heuristics (rough).

| Asset Class | Typical $C_f$ |

|---|---|

| Crypto (top-10) | 2.5-4.0 |

| Crypto (with DeFi/memecoins) | 2.0-3.0 |

| Forex (majors) | 1.5-2.5 |

| Stocks (single sector) | 2.0-3.5 |

| Stocks (cross-sector) | 1.2-1.8 |

For a crypto portfolio of BTC, ETH, SOL, AVAX, MATIC, DOGE, DOT, LINK, UNI, ATOM, a safe estimate is $C_f = 3.0$.

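The base formula accounting for correlation:

$$P(\text{at least one active}) = 1 - (1 - p)^{N_{eff}} = 1 - (1 - p)^{N / C_f}$$

Table for different strategies and number of pairs ($C_f = 3.0$):

| Strategy | $p$ (trading time) | 5 pairs ($N_{eff} = 1.7$) | 10 pairs ($N_{eff} = 3.3$) | 20 pairs ($N_{eff} = 6.7$) | 50 pairs ($N_{eff} = 16.7$) |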

|---|---|---|---|---|---|

| Strategy B | 5% | 8.2% | 15.7% | 29.0% | 57.5% |

| Strategy A | 15% | 23.7% | 41.8% | 66.2% | 93.3% |

| Strategy C | 45% | 63.1% | 86.4% | 98.1% | ~100% |

For Strategy B with 5% activity, you need 50 pairs just to have at least one active position half the time. And that doesn't even account for the fact that 50 crypto pairs are more strongly correlated than 10.

A real orchestrator manages multiple slots simultaneously. If you have 5 slots and 10 pairs, utilization is calculated differently:

```python
def estimate_fill_efficiency(
    trading_time_pct: float,
    n_pairs: int,
    correlation_factor: float = 3.0,
    max_slots: int = 1,
) -> dict:
    """
    Analytical estimate of fill efficiency for a multi-slot orchestrator.

    Args:
        trading_time_pct: fraction of active time for one strategy on one pair
        n_pairs: number of trading pairs
        correlation_factor: signal correlation factor C_f (1 = independent, N = identical)
        max_slots: maximum number of simultaneous positions

    Returns:
        dict with utilization metrics
    """
    effective_n = n_pairs / correlation_factor

    # Probability that at least one effective pair is active
    p_at_least_one = 1 - (1 - trading_time_pct) ** effective_n

    # Expected number of simultaneously active effective pairs
    expected_active = effective_n * trading_time_pct

    # Average share of slots that the expected load can occupy
    utilization = min(expected_active, max_slots) / max_slots

    fill_efficiency = min(p_at_least_one, utilization)

    return {
        "effective_n": effective_n,
        "p_at_least_one": p_at_least_one,
        "expected_active": expected_active,
        "utilization": utilization,
        "fill_efficiency": fill_efficiency,
    }


# (label, trading_time_pct, n_pairs, correlation_factor, max_slots)
configs = [
    ("Strategy B, 10 pairs, 1 slot", 0.05, 10, 3.0, 1),
    ("Strategy B, 10 pairs, 3 slots", 0.05, 10, 3.0, 3),
    ("Strategy B, 30 pairs, 1 slot", 0.05, 30, 3.0, 1),
    ("Strategy A, 10 pairs, 1 slot", 0.15, 10, 3.0, 1),
    ("Strategy C, 10 pairs, 1 slot", 0.45, 10, 3.0, 1),
    ("Strategy C, 10 pairs, 5 slots", 0.45, 10, 3.0, 5),
]

for name, p, n, cf, slots in configs:
    result = estimate_fill_efficiency(p, n, cf, slots)
    print(f"{name}:")
    print(f" N_eff = {result['effective_n']:.1f}")
    print(f" P(≥1 active) = {result['p_at_least_one']:.1%}")
    print(f" E[active] = {result['expected_active']:.2f}")
    print(f" fill_efficiency = {result['fill_efficiency']:.1%}")
    print()
```

Expected output:

```
Strategy B, 10 pairs, 1 slot:
 N_eff = 3.3
 P(≥1 active) = 15.7%
 E[active] = 0.17
 fill_efficiency = 15.7%

Strategy B, 10 pairs, 3 slots:
 N_eff = 3.3
 P(≥1 active) = 15.7%
 E[active] = 0.17
 fill_efficiency = 5.6%

Strategy B, 30 pairs, 1 slot:
 N_eff = 10.0
 P(≥1 active) = 40.1%
 E[active] = 0.50
 fill_efficiency = 40.1%

Strategy A, 10 pairs, 1 slot:
 N_eff = 3.3
 P(≥1 active) = 41.8%
 E[active] = 0.50
 fill_efficiency = 41.8%

Strategy C, 10 pairs, 1 slot:
 N_eff = 3.3
 P(≥1 active) = 86.4%
 E[active] = 1.50
 fill_efficiency = 86.4%

Strategy C, 10 pairs, 5 slots:
 N_eff = 3.3
 P(≥1 active) = 86.4%
 E[active] = 1.50
 fill_efficiency = 30.0%
```

Note: Strategy B with 3 slots and 10 pairs shows fill_efficiency of 5.6%. Three slots are pointless when the expected number of active pairs is only 0.17. Slots should be allocated proportionally to expected load.
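
One way to turn "proportional to expected load" into a number is to round the expected count of simultaneously active effective pairs up to the next integer. The sketch below does that. The function name `suggest_slots` and the round-up rule are hypothetical illustrations, not a rule taken from the orchestrator.

```python
import math


def suggest_slots(trading_time_pct: float, n_pairs: int,
                  correlation_factor: float = 3.0) -> int:
    """Hypothetical heuristic: slots = ceil(E[active]), with at least one slot."""
    expected_active = (n_pairs / correlation_factor) * trading_time_pct
    # Small epsilon keeps values like 1.0000000000000002 from rounding up to 2
    return max(1, math.ceil(expected_active - 1e-9))


for label, p, n in [
    ("Strategy B, 10 pairs", 0.05, 10),
    ("Strategy A, 10 pairs", 0.15, 10),
    ("Strategy C, 10 pairs", 0.45, 10),
    ("Strategy C, 30 pairs", 0.45, 30),
]:
    print(f"{label}: suggested slots = {suggest_slots(p, n)}")
```

Rounding up favors catching overflow over keeping every slot busy. Rounding down would do the opposite.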

The analytical model is an approximation. For accurate estimation, you need simulation on real signals:

```python
import numpy as np


def simulate_fill_efficiency(
    all_signals: dict,  # {(strategy, pair): [(entry_min, exit_min), ...]}
    max_slots: int = 10,
    test_period_minutes: int = 750 * 24 * 60,  # 750 days
    priority_fn=None,  # priority function for position selection
) -> dict:
    """
    Simulate real slot load of the orchestrator.

    For each minute: count how many pairs want to enter a position,
    and how many slots are actually occupied (accounting for the limit).

    Args:
        all_signals: signals by pairs and strategies
        max_slots: maximum number of simultaneous positions
        test_period_minutes: length of the test period in minutes
        priority_fn: if None, FIFO; otherwise a ranking function.
            Not used by this aggregate estimate, which only counts occupied slots.
    """
    demand_timeline = np.zeros(test_period_minutes, dtype=np.int32)

    # Demand: how many positions want to be open in each minute
    for signals in all_signals.values():
        for entry_min, exit_min in signals:
            if entry_min < test_period_minutes:
                end = min(exit_min, test_period_minutes)
                demand_timeline[entry_min:end] += 1

    # Occupied slots: demand capped at the slot limit
    capped_timeline = np.minimum(demand_timeline, max_slots)

    total_demand = np.sum(demand_timeline)
    total_filled = np.sum(capped_timeline)
    time_with_any_active = np.sum(demand_timeline > 0)

    fill_efficiency = np.mean(capped_timeline) / max_slots
    demand_fill_ratio = total_filled / total_demand if total_demand > 0 else 0
    time_utilization = time_with_any_active / test_period_minutes

    # Share of time spent with exactly s slots occupied
    slot_distribution = {}
    for s in range(max_slots + 1):
        slot_distribution[s] = np.mean(capped_timeline == s)

    return {
        "fill_efficiency": fill_efficiency,
        "demand_fill_ratio": demand_fill_ratio,
        "time_utilization": time_utilization,
        "avg_demand": np.mean(demand_timeline),
        "avg_filled": np.mean(capped_timeline),
        "slot_distribution": slot_distribution,
        "overflow_pct": np.mean(demand_timeline > max_slots),
    }
```

Simulation on real data often shows even lower utilization than the analytical estimate, because it accounts for temporal clustering of signals: all pairs enter simultaneously in a cluster, creating overflow, and then all go silent, creating a void.
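
The clustering effect can be seen on a toy example. The sketch below compares two made-up signal layouts with the same per-pair activity (2 minutes out of 6): one where all pairs fire together and one where they take turns. The data and the helper name `slot_utilization` are illustrative assumptions.

```python
import numpy as np


def slot_utilization(signals: np.ndarray, max_slots: int = 1) -> float:
    """Share of slot-minutes filled when per-minute demand is capped at max_slots."""
    demand = signals.sum(axis=0)                      # positions wanted per minute
    return float(np.minimum(demand, max_slots).mean() / max_slots)


# Rows are pairs, columns are minutes
clustered = np.array([
    [1, 1, 0, 0, 0, 0],
    [1, 1, 0, 0, 0, 0],
    [1, 1, 0, 0, 0, 0],
])
staggered = np.array([
    [1, 1, 0, 0, 0, 0],
    [0, 0, 1, 1, 0, 0],
    [0, 0, 0, 0, 1, 1],
])

print(f"clustered: {slot_utilization(clustered):.1%}")   # overflow for 2 minutes, then a void
print(f"staggered: {slot_utilization(staggered):.1%}")   # the single slot is always busy
```

Both layouts have identical average demand, yet with one slot the clustered layout fills a third of the time and the staggered one fills all of it.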

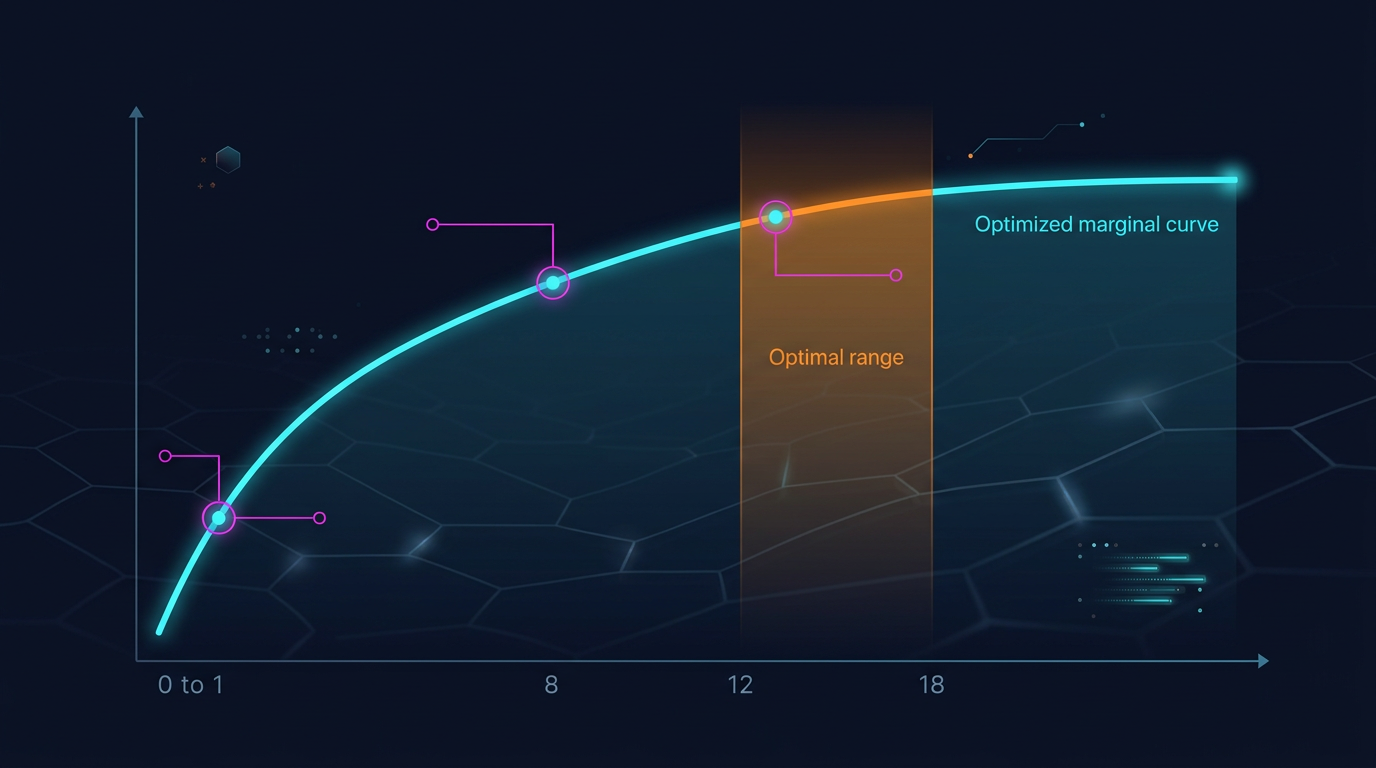

The key question: at what $N$ does adding one more pair stop noticeably increasing fill_efficiency?

```python
def diminishing_returns_analysis(
    trading_time_pct: float,
    correlation_factor: float = 3.0,
    max_pairs: int = 100,
    target_utilization: float = 0.80,
) -> dict:
    """
    Diminishing returns analysis from adding new pairs.
    """
    results = []
    target_n = None

    for n in range(1, max_pairs + 1):
        n_eff = n / correlation_factor
        p_active = 1 - (1 - trading_time_pct) ** n_eff

        # Gain in P(≥1) from adding the n-th pair
        marginal = 0
        if n > 1:
            prev_eff = (n - 1) / correlation_factor
            prev_p = 1 - (1 - trading_time_pct) ** prev_eff
            marginal = p_active - prev_p

        results.append({
            "n_pairs": n,
            "n_effective": n_eff,
            "p_at_least_one": p_active,
            "marginal_gain": marginal,
        })

        # First n at which the target is reached (None if never within max_pairs)
        if target_n is None and p_active >= target_utilization:
            target_n = n

    return {
        "results": results,
        "target_n_for_utilization": target_n,
    }


analysis_b = diminishing_returns_analysis(0.05, correlation_factor=3.0, target_utilization=0.80)
print(f"Strategy B: need {analysis_b['target_n_for_utilization']} pairs for 80% P(≥1)")

for r in analysis_b["results"]:
    if r["n_pairs"] in [1, 3, 5, 10, 20, 30, 50, 80]:
        print(f" N={r['n_pairs']:3d}: N_eff={r['n_effective']:.1f}, "
              f"P(≥1)={r['p_at_least_one']:.1%}, "
              f"marginal={r['marginal_gain']:.2%}")
```

Results for Strategy B ($p = 0.05$, $C_f = 3.0$):

| $N$ pairs | $N_{eff}$ | $P(\geq 1)$ | Marginal gain |

|---|---|---|---|

| 1 | 0.3 | 1.7% | — |

| 3 | 1.0 | 5.0% | +1.6% |

| 5 | 1.7 | 8.2% | +1.6% |

| 10 | 3.3 | 15.7% | +1.5% |

| 20 | 6.7 | 29.0% | +1.2% |

| 30 | 10.0 | 40.1% | +1.0% |

| 50 | 16.7 | 57.5% | +0.7% |

| 80 | 26.7 | 74.5% | +0.4% |

For Strategy B, reaching 80% single-slot utilization takes about 95 pairs, which is impractical. This is a fundamental limitation: a strategy with 5% trading time is not suited for single-slot operation. It needs a portfolio approach with multiple strategies.

For Strategy A ($p = 0.15$, $C_f = 3.0$):

| $N$ pairs | $N_{eff}$ | $P(\geq 1)$ | Marginal gain |

|---|---|---|---|

| 5 | 1.7 | 23.7% | — |

| 10 | 3.3 | 41.8% | +3.2% |

| 20 | 6.7 | 66.2% | +1.9% |

| 30 | 10.0 | 80.3% | +1.1% |

Strategy A reaches 80% utilization at ~30 pairs. Marginal gain at the 30th pair is only +1.1%.

For Strategy C ($p = 0.45$, $C_f = 3.0$):

| $N$ pairs | $N_{eff}$ | $P(\geq 1)$ |

|---|---|---|

| 3 | 1.0 | 45.0% |

| 5 | 1.7 | 63.1% |

| 10 | 3.3 | 86.4% |

| 15 | 5.0 | 95.0% |

Strategy C with 45% trading time reaches about 86% utilization at just 10 pairs and 95% at 15. Adding more is pointless.
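
The number of pairs needed for a given target can also be obtained in closed form by inverting the base formula: $N \geq C_f \cdot \ln(1 - \text{target}) / \ln(1 - p)$. A minimal sketch follows. The function name `pairs_needed` is illustrative, and it assumes the same single-slot model with a constant correlation factor.

```python
import math


def pairs_needed(trading_time_pct: float, target: float,
                 correlation_factor: float = 3.0) -> int:
    """Smallest N with 1 - (1 - p) ** (N / C_f) >= target."""
    n_eff_needed = math.log(1 - target) / math.log(1 - trading_time_pct)
    return math.ceil(correlation_factor * n_eff_needed)


print("Strategy B, 80% target:", pairs_needed(0.05, 0.80))
print("Strategy A, 80% target:", pairs_needed(0.15, 0.80))
print("Strategy C, 90% target:", pairs_needed(0.45, 0.90))
```

In reality the correlation factor tends to rise as more pairs are added, so these counts are best read as lower bounds.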

There is another factor that limits the number of pairs: edge degradation. A strategy developed and optimized on BTC/USDT may perform worse on less liquid alts.

Causes of degradation:

```python
def edge_decay_analysis(
    strategy_results: dict,  # {pair: {"pnl_per_day": float, "n_trades": int}}
    min_trades: int = 30,
) -> list:
    """
    Rank pairs by edge accounting for degradation.
    """
    ranked = []
    for pair, metrics in strategy_results.items():
        # Skip pairs with too few trades to trust the estimate
        if metrics["n_trades"] < min_trades:
            continue
        ranked.append({
            "pair": pair,
            "pnl_per_day": metrics["pnl_per_day"],
            "n_trades": metrics["n_trades"],
            "sharpe": metrics.get("sharpe", 0),
        })

    # Best edge first
    ranked.sort(key=lambda x: x["pnl_per_day"], reverse=True)

    # Running average of PnL/day as pairs are added in rank order
    cumulative_pnl = []
    running_sum = 0
    for i, r in enumerate(ranked):
        running_sum += r["pnl_per_day"]
        avg = running_sum / (i + 1)
        cumulative_pnl.append({
            "n_pairs": i + 1,
            "last_added": r["pair"],
            "last_pnl_per_day": r["pnl_per_day"],
            "avg_pnl_per_day": avg,
        })

    return cumulative_pnl
```

Typical picture:

| # pairs | Last Added | PnL/day of last | Average PnL/day |

|---|---|---|---|

| 1 | BTC/USDT | 0.89% | 0.89% |

| 2 | ETH/USDT | 0.82% | 0.86% |

| 3 | SOL/USDT | 0.71% | 0.81% |

| 5 | AVAX/USDT | 0.55% | 0.73% |

| 8 | DOT/USDT | 0.31% | 0.61% |

| 10 | DOGE/USDT | 0.12% | 0.53% |

Adding the 10th pair lowers the portfolio's average PnL/day. By the 8th pair, the edge is already about a third of the best pair's. A balance is needed between fill_efficiency (grows with the number of pairs) and average edge (falls).

We combine fill_efficiency and edge decay into a single metric — expected portfolio PnL per day:

```python
import numpy as np


def optimal_pairs_count(
    pair_edges: list,  # PnL/day in descending order: [0.89, 0.82, 0.71, ...]
    trading_time_pct: float,
    correlation_factor: float = 3.0,
    max_slots: int = 1,
) -> dict:
    """
    Find the optimal number of pairs that maximizes portfolio PnL.
    """
    best_n = 0
    best_score = 0
    results = []

    for n in range(1, len(pair_edges) + 1):
        # Average edge of the n best pairs
        avg_edge = np.mean(pair_edges[:n])

        n_eff = n / correlation_factor
        p_active = 1 - (1 - trading_time_pct) ** n_eff

        expected_active = n_eff * trading_time_pct
        utilization = min(expected_active, max_slots) / max_slots
        fill_eff = min(p_active, utilization)

        # Annualized score: average daily edge scaled by the share of time in position
        portfolio_score = avg_edge * fill_eff * 365

        results.append({
            "n_pairs": n,
            "avg_edge": avg_edge,
            "fill_efficiency": fill_eff,
            "portfolio_annualized": portfolio_score,
        })

        if portfolio_score > best_score:
            best_score = portfolio_score
            best_n = n

    return {
        "optimal_n": best_n,
        "optimal_score": best_score,
        "results": results,
    }


edges = [0.89, 0.82, 0.71, 0.65, 0.55, 0.48, 0.40, 0.31, 0.22, 0.12,
         0.08, 0.05, 0.02, -0.01, -0.05]

opt = optimal_pairs_count(edges, trading_time_pct=0.15, correlation_factor=3.0)
print(f"Optimal number of pairs: {opt['optimal_n']}")
print(f"Portfolio annualized: {opt['optimal_score']:.1f}%")

for r in opt["results"]:
    print(f" N={r['n_pairs']:2d}: avg_edge={r['avg_edge']:.2f}%, "
          f"fill_eff={r['fill_efficiency']:.1%}, "
          f"portfolio={r['portfolio_annualized']:.1f}%")
```

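The optimum is typically found at the point where the marginal fill_efficiency from adding a pair no longer compensates for the decline in average edge. For a typical crypto portfolio:

- Strategy B (5% trading time): 10-15 pairs
- Strategy A (15% trading time): 6-10 pairs
- Strategy C (45% trading time): 4-6 pairs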

A paradox: the strategy with the lowest trading time benefits from the most pairs, yet fill_efficiency still remains low. The solution is not more pairs, but combining with other strategies (see Combo Strategies).

If you cannot increase the number of pairs indefinitely, you can reduce $C_f$, that is, increase signal diversity.

BTC, ETH, BNB are very strongly correlated. But UNI (DEX), AAVE (lending), CRV (stablecoins) may have their own drivers. Adding DeFi tokens reduces the average $\bar{\rho}$ from 0.35 to 0.20-0.25.
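
As a worked illustration, assume the portfolio holds $N = 10$ pairs (the portfolio size is an assumption here) and apply $C_f = 1 + (N - 1)\,\bar{\rho}$:

$$\bar{\rho} = 0.35: \quad C_f = 1 + 9 \cdot 0.35 = 4.15, \quad N_{eff} = \frac{10}{4.15} \approx 2.4$$

$$\bar{\rho} = 0.20\text{-}0.25: \quad C_f = 2.8\text{-}3.25, \quad N_{eff} \approx 3.1\text{-}3.6$$

Under that assumption, the same ten slots of monitoring behave like roughly one extra independent pair.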

Instead of 10 pairs with one strategy, run 5 pairs with two different strategies. If the strategies are based on different principles (momentum vs. mean-reversion), their signals can be anti-correlated.

This is the only way to get $N_{eff} > N$: use strategies with negative signal correlation.
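
A toy example makes the effect concrete. The sketch below builds two artificial signals that never overlap and plugs their correlation into the same $C_f$ relation. The signal patterns and variable names are made up for illustration and do not come from real strategies.

```python
import numpy as np

# Two hypothetical strategies on one pair, repeating every 4 minutes:
# momentum is in position during minute 0, mean-reversion during minute 2.
momentum = np.tile([1, 0, 0, 0], 250)
mean_reversion = np.tile([0, 0, 1, 0], 250)

n_signals = 2
rho = np.corrcoef(momentum, mean_reversion)[0, 1]    # negative: they never overlap
c_f = 1 + (n_signals - 1) * rho                       # below 1 for negative rho
n_eff = n_signals / c_f                               # above n_signals

covered = np.mean((momentum | mean_reversion) > 0)    # share of time with any position
independent = 1 - (1 - momentum.mean()) ** n_signals  # what independent signals would cover

print(f"rho={rho:.3f}, C_f={c_f:.3f}, N_eff={n_eff:.2f}")
print(f"covered={covered:.1%} vs independent={independent:.1%}")
```

Note that $C_f$ approaches zero as the correlation approaches $-1/(N-1)$, so the $N_{eff}$ estimate becomes unstable for strongly anti-correlated signals.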

Funding rate arbitrage and spot trading have a different correlation structure. Adding arbitrage strategies to the portfolio significantly reduces the overall $C_f$, because arbitrage by definition exploits divergences, not convergences.

Typical representative: Strategy B (5% time, 38 trades over 750 days).

Typical representative: Strategy A (15% time, 418 trades over 750 days).

Typical representative: Strategy C (45% time).

| Parameter | Strategy B (5%) | Strategy A (15%) | Strategy C (45%) |

|---|---|---|---|

| Recommended $N$ | 10-15 | 6-10 | 4-6 |

| $N_{eff}$ at $C_f = 3$ | 3.3-5.0 | 2.0-3.3 | 1.3-2.0 |

| Fill eff. (1 slot) | 16-23% | 28-42% | 55-70% |

| Combination needed? | Mandatory | Desirable | No |

| Bottleneck | Few signals | Balance | Overflow |

This article is the eleventh in the "Backtests Without Illusions" series. Signal correlation directly impacts the metrics from previous articles:

PnL per Active Time: fill_efficiency is the key multiplier in the effective return formula. If you overestimate fill_efficiency by ignoring correlation, your portfolio PnL forecast will be overly optimistic.

Funding rates: with high correlation, positions open simultaneously — and funding costs grow linearly with the number of slots. Overflow + funding = accelerated capital burn.

Funding rate arbitrage: arbitrage strategies are a natural diversifier that reduces the portfolio's $C_f$. Their signals are weakly correlated with momentum and mean-reversion strategies.

Combo strategies (next article): how to assemble a portfolio of strategies with different $p$ and $C_f$ to achieve 90%+ utilization. Cascade orchestration accounts for signal correlation when assigning priorities.

Diversification in crypto is not about the number of pairs. 10 correlated pairs yield the effect of 3-4 independent ones. During panic, even fewer.

Four takeaways:

1. Calculate effective_N, not N. For crypto pairs $C_f \approx 3$. Ten pairs are ~3.3 effective ones. Plan fill_efficiency based on $N_{eff}$, not $N$.

2. Measure signal correlation, not price correlation. Price correlation is a proxy, not a substitute. Run the strategy on all pairs and compute the binary signal correlation matrix.

3. Account for edge degradation. More pairs means lower average PnL/day. The optimum is at the point where marginal fill_efficiency from a new pair still compensates for the edge decline.

4. Reduce $C_f$, don't increase $N$. Combining different strategies on the same pairs is more effective than one strategy on more pairs. Cross-strategy diversification can yield $N_{eff} > N$.

Correlation factor is the hidden variable that determines how realistic your utilization and return forecasts are. Ignoring it means building a portfolio on illusions.

@article{soloviov2026signalcorrelation, author = {Soloviov, Eugen}, title = {Signal Correlation: How Many Pairs to Monitor}, year = {2026}, url = {https://marketmaker.cc/ru/blog/post/signal-correlation-pairs}, version = {0.1.0}, description = {Why 10 crypto pairs don't provide 10x diversification, how to calculate effective\_N via correlation\_factor, and how many pairs you really need to monitor for 80-90\% orchestrator slot utilization.} }