LLM Alpha Mining: How to Extract Trading Signals from Earnings Calls and Financial Documents

There's a joke on Wall Street: "The most valuable information in an earnings call isn't what the CEO said, but how he said it." When Tim Cook says "we are cautiously optimistic" instead of last year's "we are very pleased" — that's not a linguistic game, it's a signal worth hundreds of millions of dollars.

For decades, quant funds have tried to systematize the extraction of these signals. First, they counted the frequency of "positive" and "negative" words using dictionaries. Then they unleashed BERT. And now we have GPT-4o, Claude, and open-source LLMs capable of parsing the subtleties of corporate doublespeak with accuracy that frightens even the researchers themselves.

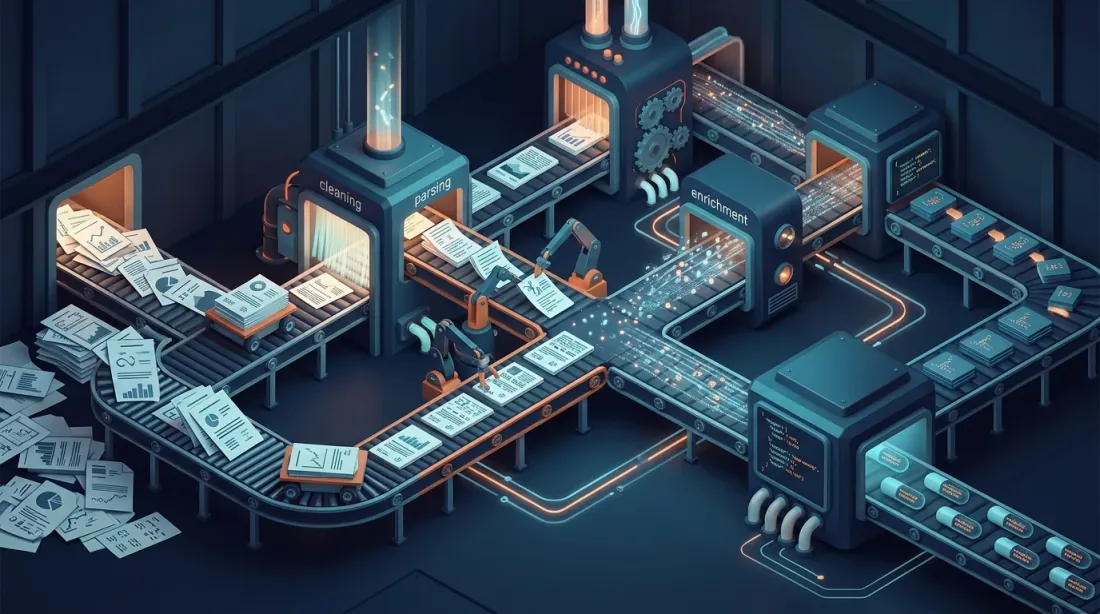

Let's break down how to build a full-fledged pipeline for extracting trading signals from earnings calls — from obtaining the transcript to backtesting cumulative abnormal returns.

Why Earnings Calls Are a Goldmine for Alpha

Post-Earnings Announcement Drift: The Anomaly That Won't Die

In 1968, Ball and Brown discovered something strange: after quarterly results are published, stocks continue to drift in the direction of the "surprise" for another 60-90 days. They called it Post-Earnings Announcement Drift (PEAD). More than half a century has passed since then, hundreds of papers have been written, the anomaly has been explained from a dozen angles — and it still works.

PEAD is one of the most persistent market anomalies in the history of finance. A portfolio strategy of "buy positive surprise, sell negative surprise" has historically delivered 10-25% annual excess return. Why hasn't the market arbitraged this away? Several reasons:

- Limited investor attention — when 200 companies report in the same week, it's physically impossible to read all the transcripts

- Cognitive complexity — an earnings call lasts 45-60 minutes, and the key signal can be buried in a single sentence at the 38th minute of the Q&A session

- Language ambiguity — the CFO says "we are navigating headwinds," and without context it's unclear whether this is a soft warning or standard hedging

This is where LLMs enter the stage. For the first time, we have a tool that can process 500 transcripts in an evening while catching nuances that even experienced analysts would miss.

PEAD.txt: Text Matters More Than Numbers

Researchers from the Federal Reserve Bank of Philadelphia (Meursault, Liang, Routledge, Scanlon) published the PEAD.txt paper, which overturned assumptions about the value of textual information. They built a text-based analog of the standard earnings surprise — SUE.txt — that doesn't use the numerical earnings value at all.

The result? SUE.txt generates drift that is twice as large as classic PEAD. Moreover: in recent years, while classic PEAD based on numerical surprises has virtually disappeared (the market learned), the textual drift remains significant. The market learned to process numbers quickly but still struggles with text interpretation.

This is the fundamental argument in favor of an NLP-based approach to earnings calls.

From Sentiment to Semantics: Evolution of Approaches

First Generation: Bag-of-Words and Dictionaries (2000-2015)

It all started with the Loughran-McDonald dictionary (2011) — a word list labeled as "positive," "negative," "uncertain," and "litigious." The idea was elegant in its simplicity: count the percentage of negative words in a 10-K filing and trade on that.

The problem? The word "outstanding" in a financial context more often means "unpaid debt" rather than "excellent result." The word "risk" in Risk Management isn't a negative signal — it's a process description. Standard NLP sentiment dictionaries performed embarrassingly poorly on financial texts.

Loughran and McDonald created a specialized dictionary, which improved the situation, but the fundamental problem remained: bag-of-words doesn't understand context. "We did not fail to meet expectations" — there are two "negative" words here, but the meaning is positive.

Second Generation: FinBERT and Transformers (2019-2023)

In 2019, Dogu Araci published FinBERT — BERT fine-tuned on financial texts from Reuters TRC2. The results were impressive: a 14 percentage-point improvement on the Financial PhraseBank dataset over the state-of-the-art. FinBERT understood context: "outstanding" next to "debt" — negative, next to "performance" — positive.

But FinBERT had a limitation: a 512-token context window. An earnings call runs 8,000-12,000 words. Splitting into chunks and averaging sentiment means losing inter-paragraph semantics. The CEO might start with optimism, then casually mention supply chain problems during Q&A. FinBERT analyzes each chunk independently and misses this contrast.

Third Generation: LLMs with Long Context (2023-Present)

GPT-4, Claude, Gemini with 128K-1M token context windows changed the rules of the game. Now you can load an entire transcript at once and ask questions that require understanding the whole document.

The key study — Lopez-Lira & Tang (2023) "Can ChatGPT Forecast Stock Price Movements?" On 50,000+ headlines, GPT-4 showed ~90% hit rate in predicting the direction of the initial market reaction and significantly predicted the subsequent drift, especially for small-cap companies and negative news. Earlier models (GPT-1, GPT-2, BERT) didn't show this ability — predictive power emerges as an emergent property of large models.

BloombergGPT (2023) — a 50-billion parameter model trained on Bloomberg's financial corpus — demonstrated improvements in financial NER, news classification, and sentiment analysis. FinGPT — its open-source alternative — achieves 89% accuracy on financial sentiment tasks using a data-centric approach and RAG.

MarketSenseAI, which uses GPT-4 with Chain-of-Thought and In-Context Learning for S&P 100 analysis, showed excess alpha of 10-30% and cumulative returns of up to 72% over 15 months of testing. Yes, these numbers should be taken with caution (backtest ≠ live trading), but the trend is clear.

Data Pipeline: Where to Get the Data

SEC EDGAR: The Official Source

For US equities, the primary source is SEC EDGAR. Earnings calls are not typically filed directly, but related documents are available:

- 8-K filings (Item 2.02 — Results of Operations) — press releases with results, often including exhibit 99 with the transcript

- 10-Q / 10-K — quarterly and annual reports with Management Discussion & Analysis (MD&A) — also a valuable text source

- DEF 14A — proxy statements with management compensation information

from edgar import Company

company = Company("AAPL")

filings = company.get_filings(form="8-K")

for filing in filings.latest(10):

if "2.02" in str(filing.items):

doc = filing.document()

text = doc.text() # Full text with exhibits

print(f"{filing.filing_date}: {len(text)} chars")

Seeking Alpha and Commercial APIs

Earnings call transcripts are a separate product. Seeking Alpha was historically the main free source, but now restricts access. Commercial options:

- Seeking Alpha Premium API — full transcripts with speaker labels

- AlphaVantage Earnings API — free tier with limitations

- Financial Modeling Prep — transcripts + fundamentals

- Earnings Call Edge / Motley Fool Transcripts — alternative sources

Crypto: Governance Calls and DAO Proposals

This is where things get interesting. Major DeFi protocols hold their equivalent of earnings calls:

- Uniswap — governance calls, community calls, recordings on YouTube

- Aave — monthly community calls + governance forum proposals

- MakerDAO — governance calls + extensive forum discussions

- Compound — governance proposals with detailed discussions

Crypto call transcripts are usually unstructured. The solution — OpenAI's Whisper for transcribing YouTube recordings:

import openai

from yt_dlp import YoutubeDL

def transcribe_governance_call(youtube_url: str) -> str:

"""Download audio from YouTube and transcribe via Whisper."""

ydl_opts = {

'format': 'bestaudio/best',

'postprocessors': [{

'key': 'FFmpegExtractAudio',

'preferredcodec': 'mp3',

'preferredquality': '64', # Low bitrate is sufficient for speech

}],

'outtmpl': '/tmp/governance_call.%(ext)s',

}

with YoutubeDL(ydl_opts) as ydl:

ydl.download([youtube_url])

client = openai.OpenAI()

with open("/tmp/governance_call.mp3", "rb") as audio_file:

transcript = client.audio.transcriptions.create(

model="whisper-1",

file=audio_file,

response_format="verbose_json",

timestamp_granularities=["segment"]

)

return transcript.text

The cost of transcription via the Whisper API: 0.36. For a self-hosted option — Whisper Large-v3 Turbo transcribes a 60-minute file in ~17 seconds (216x real-time) on a modern GPU.

LLM Prompting Strategies: From Naive to Production-Grade

Strategy 1: Direct Sentiment (Weak)

The most naive approach — ask the model directly:

"Is this earnings call positive or negative for the stock price?"

Does this work? Yes, surprisingly. Lopez-Lira & Tang showed that even such a primitive prompt produces statistically significant predictions. But there are problems:

- Binary output — you lose gradations. "Catastrophe" and "mild disappointment" get the same label

- No explanation — it's unclear what the model based its decision on

- Instability — a repeated run may give a different answer

Strategy 2: Structured Extraction with Chain-of-Thought (Strong)

The idea: instead of a single number, extract a structured set of signals, forcing the model to explain each step.

from pydantic import BaseModel, Field

from openai import OpenAI

from enum import Enum

from typing import Optional

class SentimentLevel(str, Enum):

VERY_BEARISH = "very_bearish"

BEARISH = "bearish"

NEUTRAL = "neutral"

BULLISH = "bullish"

VERY_BULLISH = "very_bullish"

class GuidanceSurprise(BaseModel):

"""Deviation of forward guidance from consensus expectations."""

revenue_guidance_vs_consensus: Optional[float] = Field(

None, description="% deviation of revenue guidance from consensus"

)

margin_guidance_direction: Optional[str] = Field(

None, description="expanding / stable / contracting"

)

key_quote: str = Field(

description="Verbatim quote with guidance"

)

reasoning: str = Field(

description="CoT: why this guidance matters"

)

class ConfidenceMetrics(BaseModel):

"""Management confidence metrics."""

hedge_word_count: int = Field(

description="Number of hedge words: 'approximately', 'potentially', 'subject to'"

)

forward_looking_ratio: float = Field(

description="Ratio of forward-looking statements to total statements"

)

q_and_a_evasion_count: int = Field(

description="Number of questions where CEO/CFO gave an evasive answer"

)

ceo_vs_cfo_sentiment_delta: float = Field(

description="Sentiment difference between CEO and CFO (-1 to 1). Divergence is a red flag"

)

class CompetitiveIntelligence(BaseModel):

"""Mentions of competitors and market position."""

competitors_mentioned: list[str] = Field(

description="List of mentioned competitors"

)

market_share_claims: list[str] = Field(

description="Market share claims"

)

new_product_signals: list[str] = Field(

description="Signals about new products/services"

)

class ManagementSignals(BaseModel):

"""Management signals."""

turnover_risk: SentimentLevel = Field(

description="Risk of key management turnover"

)

tone_shift_from_previous: Optional[str] = Field(

None, description="How tone changed compared to last quarter"

)

insider_language_flags: list[str] = Field(

description="Marker phrases: 'exploring strategic alternatives', 'right-sizing', etc."

)

class EarningsCallAnalysis(BaseModel):

"""Complete earnings call analysis."""

ticker: str

quarter: str

overall_sentiment: SentimentLevel

sentiment_score: float = Field(description="from -1.0 to 1.0")

guidance_surprise: GuidanceSurprise

confidence_metrics: ConfidenceMetrics

competitive_intel: CompetitiveIntelligence

management_signals: ManagementSignals

key_risks: list[str]

key_catalysts: list[str]

one_line_summary: str

def analyze_earnings_call(transcript: str, ticker: str, quarter: str) -> EarningsCallAnalysis:

"""

Extract structured signals from an earnings call.

Cost: ~$0.15-0.30 per call (GPT-4o, ~10K tokens input).

"""

client = OpenAI()

system_prompt = """You are a senior equity research analyst with 20 years of experience.

Analyze the following earnings call transcript and extract structured trading signals.

IMPORTANT INSTRUCTIONS:

1. Use Chain-of-Thought reasoning for each field — explain WHY before giving the value

2. Focus on DEVIATIONS from expectations, not absolute statements

3. Pay special attention to Q&A section — management is less scripted there

4. Compare management's language to typical corporate hedging baseline

5. Flag any "strategic alternatives", "right-sizing", or other euphemisms

6. Score sentiment relative to market expectations, not in absolute terms

HEDGE WORDS TO COUNT: approximately, potentially, subject to, may, might,

could, uncertain, challenging, headwinds, navigate, prudent, cautious,

evolving, dynamic, unprecedented, transitional"""

completion = client.beta.chat.completions.parse(

model="gpt-4o",

messages=[

{"role": "system", "content": system_prompt},

{"role": "user", "content": f"Ticker: {ticker}\nQuarter: {quarter}\n\n{transcript}"}

],

response_format=EarningsCallAnalysis,

temperature=0.1, # Low temperature for reproducibility

)

return completion.choices[0].message.parsed

Note several key points:

Pydantic schema — OpenAI Structured Outputs guarantees 100% schema compliance. No more "sorry, I cannot parse the JSON." Each field has a description that acts as a mini-prompt for a specific aspect of the analysis.

Chain-of-Thought within the schema — the reasoning and key_quote fields force the model to "show its work." This not only improves quality (the model is forced to find a specific quote before making a judgment) but also creates an audit trail for regulators.

Temperature 0.1 — we don't want creativity. We need reproducibility. At temperature 0, the model sometimes gets "stuck" in patterns; 0.1 is the optimal compromise.

Strategy 3: Few-Shot with Historical Examples

Even more powerful — give the model examples of past earnings calls with actual market reactions:

few_shot_examples = """

EXAMPLE 1:

Transcript excerpt: "We are cautiously optimistic about the second half...

While we continue to navigate macro headwinds, our pipeline remains robust."

Actual market reaction: -3.2% (next day)

Analysis: Despite surface-level positivity, "cautiously optimistic" is a

DOWNGRADE from previous quarter's "very confident". Five hedge words in

two sentences. Market read through the hedging.

EXAMPLE 2:

Transcript excerpt: "Frankly, demand has exceeded our ability to supply.

We're expediting CapEx to address this."

Actual market reaction: +7.8% (next day)

Analysis: "Frankly" signals genuine surprise even from management.

Accelerated CapEx on demand = strong confidence. No hedging language.

"""

Few-shot examples help the model calibrate: it learns that "cautiously optimistic" in Wall Street language isn't positive — it's a soft negative. Without examples, the LLM might interpret words literally.

Four Types of Signals

1. Guidance Surprise

The most direct signal. A company provides a forecast (guidance) for the next quarter/year, and the market reacts to the deviation from consensus. An LLM can extract guidance even when management communicates it vaguely:

- "We expect revenues in the range of..." — direct guidance, easy to parse

- "We feel comfortable with current Street estimates" — implicit confirmation of consensus

- "There are puts and takes relative to consensus" — implicit risk signal

An LLM understands all three phrasings; regex understands only the first.

2. Confidence Metrics: Hedge Word Density

This is my favorite signal because it's counterintuitive. The essence: managers are people with legal training and paranoid legal departments. When things are going well, they allow themselves to be specific. When problems are brewing — they start hedging.

Metrics to track:

| Metric | Description | Bearish Signal |

|---|---|---|

| Hedge word density | Share of hedge words per 1,000 words | > 15 per 1,000 words |

| Certainty ratio | Ratio of "will/expect" vs "may/could" | < 1.5 |

| Q&A evasion rate | % of questions without a direct answer | > 30% |

| CEO/CFO delta | Tone divergence between CEO and CFO | > 0.3 on scale [-1, 1] |

The last point is particularly interesting. The CEO is a storyteller — his job is to paint a pretty picture. The CFO is the one accountable to auditors. When the CEO says "transformative growth ahead" and the CFO immediately interjects "while maintaining disciplined cost management" — this divergence signals internal tensions.

3. Competitive Intelligence

An LLM can extract competitor mentions from a transcript, even when management avoids direct names. "The largest player in the market" — that's no mystery for GPT-4 if it knows the industry.

Trading signal: if company A mentions competitor B in a negative context during its earnings call ("we're taking share from..."), that's a signal not only for A (long) but also for B (short). A pairs trade.

4. Management Turnover Signals

Marker phrases for management changes or strategic pivots:

- "Exploring strategic alternatives" — likely company sale

- "Right-sizing our operations" — mass layoffs

- "The board has initiated a comprehensive review" — the CEO will leave soon

- "We're bringing in fresh perspectives" — the current team has failed

Each of these phrases has a statistically significant correlation with subsequent price dynamics. An LLM can detect them with zero false positives — because it understands context, unlike regex, which might catch "strategic alternatives" in a product line description.

Backtesting: Event Study Methodology

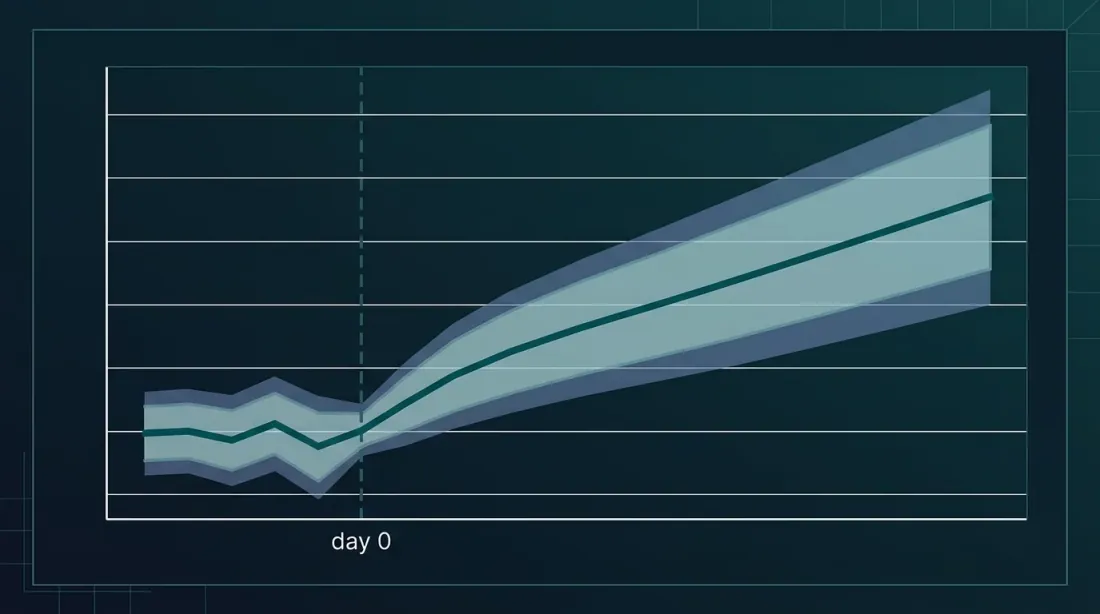

We're generating signals — great. But do they work? The standard verification method is an Event Study with Cumulative Abnormal Returns (CAR) calculation.

Methodology

- Define the event — the earnings call date

- Estimation window — [-250, -30] trading days before the event to estimate "normal" returns

- Event window — [-1, +60] days around the event

- Calculate normal returns via the market model:

- Abnormal return — the difference between actual and "normal" returns

- CAR — cumulative sum of abnormal returns over the event window

import numpy as np

import pandas as pd

from scipy import stats

from dataclasses import dataclass

@dataclass

class EventStudyResult:

car: np.ndarray # Cumulative abnormal returns by day

t_stats: np.ndarray # t-statistics for each day

avg_car_3d: float # CAR[-1, +1]

avg_car_30d: float # CAR[-1, +30]

avg_car_60d: float # CAR[-1, +60]

p_value_3d: float

p_value_30d: float

n_events: int

def run_event_study(

returns: pd.DataFrame, # Daily stock returns (columns = tickers)

market_returns: pd.Series, # Daily market index returns

events: pd.DataFrame, # DataFrame with columns: [ticker, date, signal_score]

estimation_window: int = 220,

gap: int = 30,

event_window: tuple = (-1, 60),

) -> EventStudyResult:

"""

Event study to evaluate the predictive power of LLM signals.

Sort events by signal_score, form long/short portfolios,

calculate CAR and test statistical significance.

"""

all_cars = []

for _, event in events.iterrows():

ticker = event['ticker']

event_date = event['date']

if ticker not in returns.columns:

continue

try:

event_idx = returns.index.get_loc(event_date, method='ffill')

except KeyError:

continue

est_start = event_idx - estimation_window - gap

est_end = event_idx - gap

if est_start < 0:

continue

y = returns.iloc[est_start:est_end][ticker].values

x = market_returns.iloc[est_start:est_end].values

mask = ~(np.isnan(y) | np.isnan(x))

if mask.sum() < 60: # Minimum 60 observations

continue

y_clean, x_clean = y[mask], x[mask]

slope, intercept, _, _, _ = stats.linregress(x_clean, y_clean)

residual_std = np.std(y_clean - (intercept + slope * x_clean))

ev_start = event_idx + event_window[0]

ev_end = event_idx + event_window[1] + 1

if ev_end > len(returns):

continue

actual = returns.iloc[ev_start:ev_end][ticker].values

market = market_returns.iloc[ev_start:ev_end].values

expected = intercept + slope * market

ar = actual - expected

car = np.cumsum(ar)

all_cars.append(car)

if not all_cars:

raise ValueError("No valid events found")

min_len = min(len(c) for c in all_cars)

all_cars = np.array([c[:min_len] for c in all_cars])

mean_car = np.mean(all_cars, axis=0)

std_car = np.std(all_cars, axis=0) / np.sqrt(len(all_cars))

t_stats = mean_car / (std_car + 1e-10)

offset = -event_window[0] # Shift to event date

car_3d = mean_car[min(offset + 1, min_len - 1)] if min_len > offset + 1 else mean_car[-1]

car_30d = mean_car[min(offset + 30, min_len - 1)] if min_len > offset + 30 else mean_car[-1]

car_60d = mean_car[min(offset + 60, min_len - 1)] if min_len > offset + 60 else mean_car[-1]

n = len(all_cars)

p_3d = 2 * (1 - stats.t.cdf(abs(car_3d / (np.std([c[min(offset+1, min_len-1)] for c in all_cars]) / np.sqrt(n) + 1e-10)), df=n-1))

p_30d = 2 * (1 - stats.t.cdf(abs(car_30d / (np.std([c[min(offset+30, min_len-1)] for c in all_cars]) / np.sqrt(n) + 1e-10)), df=n-1))

return EventStudyResult(

car=mean_car,

t_stats=t_stats,

avg_car_3d=car_3d,

avg_car_30d=car_30d,

avg_car_60d=car_60d,

p_value_3d=p_3d,

p_value_30d=p_30d,

n_events=n,

)

def backtest_llm_signals(

llm_signals: pd.DataFrame, # [ticker, date, sentiment_score]

returns: pd.DataFrame,

market_returns: pd.Series,

):

"""Backtest: long top-quintile signals, short bottom-quintile."""

llm_signals['quintile'] = pd.qcut(

llm_signals['sentiment_score'], 5, labels=[1, 2, 3, 4, 5]

)

long_events = llm_signals[llm_signals['quintile'] == 5].copy()

short_events = llm_signals[llm_signals['quintile'] == 1].copy()

long_result = run_event_study(returns, market_returns, long_events)

short_result = run_event_study(returns, market_returns, short_events)

print(f"LONG portfolio (top quintile LLM sentiment):")

print(f" CAR[0,+3]: {long_result.avg_car_3d:+.2%} (p={long_result.p_value_3d:.4f})")

print(f" CAR[0,+30]: {long_result.avg_car_30d:+.2%} (p={long_result.p_value_30d:.4f})")

print(f" N events: {long_result.n_events}")

print(f"\nSHORT portfolio (bottom quintile LLM sentiment):")

print(f" CAR[0,+3]: {short_result.avg_car_3d:+.2%} (p={short_result.p_value_3d:.4f})")

print(f" CAR[0,+30]: {short_result.avg_car_30d:+.2%} (p={short_result.p_value_30d:.4f})")

print(f" N events: {short_result.n_events}")

ls_3d = long_result.avg_car_3d - short_result.avg_car_3d

ls_30d = long_result.avg_car_30d - short_result.avg_car_30d

print(f"\nLONG-SHORT spread:")

print(f" CAR[0,+3]: {ls_3d:+.2%}")

print(f" CAR[0,+30]: {ls_30d:+.2%}")

What We Expect to See

Based on existing research, realistic CARs for LLM signals:

| Window | Long portfolio | Short portfolio | L/S spread |

|---|---|---|---|

| [0, +1] | +0.8% — +1.5% | -0.5% — -1.2% | 1.3% — 2.7% |

| [0, +30] | +1.5% — +3.0% | -1.0% — -2.5% | 2.5% — 5.5% |

| [0, +60] | +2.0% — +4.0% | -1.5% — -3.5% | 3.5% — 7.5% |

The key indicator is statistical significance. With p < 0.01 and N > 200 events, you can speak of a robust signal. With p > 0.05 — it could be noise.

Production Implementation: From Jupyter to Prod

Real-time Pipeline Architecture

YouTube/Audio Stream

│

▼

┌─────────────────┐ ┌──────────────────┐

│ Whisper │───▶│ Transcript │

│ Transcription │ │ Buffer │

│ (streaming) │ │ (Redis Stream) │

└─────────────────┘ └──────────────────┘

│

┌─────────┴─────────┐

▼ ▼

┌──────────────┐ ┌──────────────┐

│ Real-time │ │ Full-call │

│ Chunk Anal. │ │ Analysis │

│ (every 5min) │ │ (after call │

│ │ │ ends) │

└──────────────┘ └──────────────┘

│ │

▼ ▼

┌──────────────────────────────┐

│ Signal Aggregator │

│ (confidence-weighted merge) │

└──────────────────────────────┘

│

▼

┌──────────────────────────────┐

│ Trading Engine │

│ (position sizing, risk mgmt)│

└──────────────────────────────┘

Cost Analysis: How Much Does One Earnings Call Cost

Let's break down the economics of processing a single earnings call in production:

| Component | Cost | Latency |

|---|---|---|

| Whisper API transcription (60 min) | $0.36 | ~17 sec (Turbo) |

| GPT-4o structured extraction | $0.15-0.30 | ~8-15 sec |

| GPT-4o real-time chunk analysis (x12) | $1.80-3.60 | ~5 sec each |

| Embedding for RAG storage | $0.01 | <1 sec |

| Total (full pipeline) | $2.30-4.30 | ~30 sec full |

Wait, the estimate was $30-50 per call. Where do those numbers come from? It depends on the model and approach:

- Budget option (GPT-4o-mini, single pass): $0.50-1.00

- Standard option (GPT-4o, structured extraction + chunk analysis): $2-5

- Premium option (GPT-4o, multiple passes, cross-validation, historical comparison): $15-30

- Hedge-fund grade (multiple models + human review + real-time streaming): $30-50+

For a quant fund trading 500 tickers, the cost of processing an earnings season (~2,000 calls over 6 weeks) is 10,000 with the standard option. Given an average alpha per position of 1-3% — the ROI is astronomical.

Latency: The Race for Milliseconds

In the world of HFT, latency is everything. But for earnings-based strategies, the situation is different:

- An earnings call lasts 45-60 minutes — you have time

- PEAD stretches over 60 days — no need to enter in the first second

- The main dislocation happens in the first 30 minutes after the call ends

The optimal strategy is two-phase:

- Phase 1 (real-time): analyze 5-minute chunks during the call, forming a preliminary signal

- Phase 2 (post-call): full analysis of the entire transcript 2-5 minutes after it ends

Phase 1 provides a 5-10 minute edge over market participants who wait for the call to end. For mid-cap stocks, this is enough.

Expanding to Crypto: DeFi Governance and DAO Proposals

The crypto market is an ideal testing ground for LLM alpha mining. Here's why:

- Fewer institutional players — meaning more inefficiency to exploit

- Governance = earnings call — DAO decisions directly affect tokenomics

- 24/7 market — you can trade the reaction immediately

- Public data — all proposals and votes are on-chain

Types of Crypto Events for Analysis

Governance Proposals (Aave, Compound, Uniswap)

A proposal changes protocol parameters — interest rates, collateral factors, fee switches. An LLM can evaluate the economic impact:

crypto_analysis_prompt = """Analyze this DeFi governance proposal.

Extract:

1. Economic impact on token holders (positive/negative/neutral)

2. TVL impact estimate (increase/decrease/stable + magnitude)

3. Competitive positioning vs other protocols

4. Risk factors introduced by the proposal

5. Historical precedent (similar proposals in other protocols)

6. Likely voting outcome based on forum discussion sentiment

Proposal: {proposal_text}

Forum discussion: {discussion_text}

"""

Protocol Update Announcements

When Uniswap announces v4 with hooks, or Aave launches GHO — that's the equivalent of a product launch in TradFi. An LLM can assess narrative momentum and technical significance.

Treasury Reports

Large DAOs have treasuries worth hundreds of millions. Quarterly treasury reports are a direct analog of earnings. Runway, burn rate, diversification — all amenable to LLM analysis.

Specifics of Crypto Signals

Unlike TradFi, in crypto:

- On-chain data confirms or contradicts the narrative — you can cross-reference what's said on governance calls with actual protocol metrics (TVL, volume, active users)

- Whale wallets as insider trading — large wallet movements after governance discussions often precede voting

- Sentiment amplification through CT (Crypto Twitter) — a signal from a governance call can be amplified or suppressed by Twitter narratives

Pitfalls and Limitations

Hallucinations: When the Model Invents Numbers

An LLM can "extract" guidance that wasn't in the transcript. This is especially dangerous when analyzing hedge word density: the model may count more or fewer words than actually exist.

Solution: two-phase verification. The LLM extracts, deterministic code verifies. For hedge words — regex counting in parallel with LLM assessment. Deviation > 20% — flag for manual review.

import re

HEDGE_WORDS = [

r'\bapproximately\b', r'\bpotentially\b', r'\bsubject to\b',

r'\bmay\b', r'\bmight\b', r'\bcould\b', r'\buncertain\b',

r'\bchallenging\b', r'\bheadwinds\b', r'\bnavigate\b',

r'\bprudent\b', r'\bcautious\b', r'\bevolving\b',

r'\bdynamic\b', r'\bunprecedented\b', r'\btransitional\b',

]

def verify_hedge_count(text: str, llm_count: int) -> dict:

"""Deterministic verification of LLM hedge word count."""

regex_count = sum(

len(re.findall(pattern, text, re.IGNORECASE))

for pattern in HEDGE_WORDS

)

deviation = abs(llm_count - regex_count) / (regex_count + 1)

return {

"llm_count": llm_count,

"regex_count": regex_count,

"deviation": deviation,

"needs_review": deviation > 0.2,

}

Context Window Limitations

Even 128K tokens may not be enough if you want to feed:

- Current transcript (~10K tokens)

- Previous transcript for comparison (~10K)

- Analyst consensus forecasts (~2K)

- Few-shot examples (~3K)

- System prompt (~1K)

Total ~26K — we fit. But if you add a 10-K filing (~80-120K tokens) for context — you're already on the edge. Solution: RAG for retrieving relevant chunks from long documents.

Bias and Systematic Errors

LLMs are trained on historical data where certain phrases were associated with certain outcomes. But the market adapts:

- If everyone starts counting hedge words with GPT-4, managers will change their language

- The model may overweight the significance of patterns from training data (survivorship bias)

- Corporate language evolves: "synergies" in 2010 meant one thing, in 2026 — something else

Crowded Trade Risk

If 50 quant funds use the same GPT-4 to analyze the same transcripts — the signal degrades. An analogy: when everyone started trading PEAD on numerical surprises, the anomaly shrank. The same will happen with textual signals, but with a delay:

- Now (2026) — few systematically use LLMs for earnings calls. Alpha is significant

- In 2-3 years — widespread adoption, alpha decreases

- In 5 years — basic LLM signals become a commodity, edge persists only in custom models and unique data

This is the standard alpha signal lifecycle. Enjoy it while it lasts.

Instead of a Conclusion: An Action Plan

If you want to start using LLMs for earnings call analysis, here's a minimum viable plan:

- Start with free data — SEC EDGAR + EdgarTools for 8-K/10-Q filings

- Use structured extraction — Pydantic schemas via OpenAI Structured Outputs

- Backtest via event study — CAR on historical data, minimum 200 events

- Add few-shot examples — 5-10 labeled examples radically improve quality

- Verify deterministically — LLM extracts, regex verifies, human audits

- Start with mid-cap — more alpha, less competition with major funds

- Expand to crypto — governance calls and DAO proposals as uncharted territory

Remember the cardinal rule of quantitative analysis: if a signal sounds too good to be true — check again. LLMs create an illusion of understanding, but behind it lies statistical pattern matching. A powerful tool — but a tool, not an oracle.

Bibliography

-

Ball, R., Brown, P. (1968). An Empirical Evaluation of Accounting Income Numbers. Journal of Accounting Research, 6(2), 159-178. — First discovery of PEAD.

-

Bernard, V.L., Thomas, J.K. (1989). Post-Earnings-Announcement Drift: Delayed Price Response or Risk Premium? Journal of Accounting Research, 27, 1-36. — Canonical PEAD paper.

-

Loughran, T., McDonald, B. (2011). When Is a Liability Not a Liability? Textual Analysis, Dictionaries, and 10-Ks. Journal of Finance, 66(1), 35-65. — Financial sentiment dictionary.

-

Araci, D. (2019). FinBERT: Financial Sentiment Analysis with Pre-trained Language Models. arXiv:1908.10063. — BERT for financial NLP, +14pp over SOTA.

-

Wu, S. et al. (2023). BloombergGPT: A Large Language Model for Finance. arXiv:2303.17564. — Bloomberg's 50B-parameter model.

-

Yang, H. et al. (2023). FinGPT: Open-Source Financial Large Language Models. arXiv:2306.06031. — Open-source alternative to BloombergGPT, 89% accuracy.

-

Lopez-Lira, A., Tang, Y. (2023). Can ChatGPT Forecast Stock Price Movements? Return Predictability and Large Language Models. arXiv:2304.07619. — GPT-4 predicts returns with ~90% hit rate.

-

Meursault, V., Liang, P.J., Routledge, B., Scanlon, M.M. (2023). PEAD.txt: Post-Earnings-Announcement Drift Using Text. Journal of Financial and Quantitative Analysis. — Text-based PEAD is twice as large as numerical PEAD.

-

Fatouros, G. et al. (2024). Can Large Language Models Beat Wall Street? Evaluating GPT-4's Impact on Financial Decision-Making with MarketSenseAI. Neural Computing and Applications. — GPT-4 framework with 10-30% excess alpha on S&P 100.

-

Chen, Y. et al. (2025). GPT-Signal: Generative AI for Semi-automated Feature Engineering in the Alpha Research Process. arXiv:2410.18448. — Automatic generation of trading signals via LLM.

-

Zhang, X. et al. (2025). Can LLMs Hit Moving Targets? Tracking Evolving Signals in Corporate Disclosures. arXiv:2510.03195. — Detection of "moving targets" in corporate disclosures.

-

Chen, Z. et al. (2025). Large Language Models in Equity Markets: Applications, Techniques, and Insights. Frontiers in Artificial Intelligence. — Survey of 84 LLM studies in finance.

This article is educational in nature and does not constitute investment advice. Any trading strategies described here require thorough backtesting and risk management before deployment with real capital.

MarketMaker.cc Team

Miqdoriy tadqiqotlar va strategiya

Read More

The Black-Scholes Formula: Option Mathematics and the Holy Grail of Trading

ZigBolt: Why We Built Our Own Aeron in Zig and Hit 20 Nanoseconds Per Message